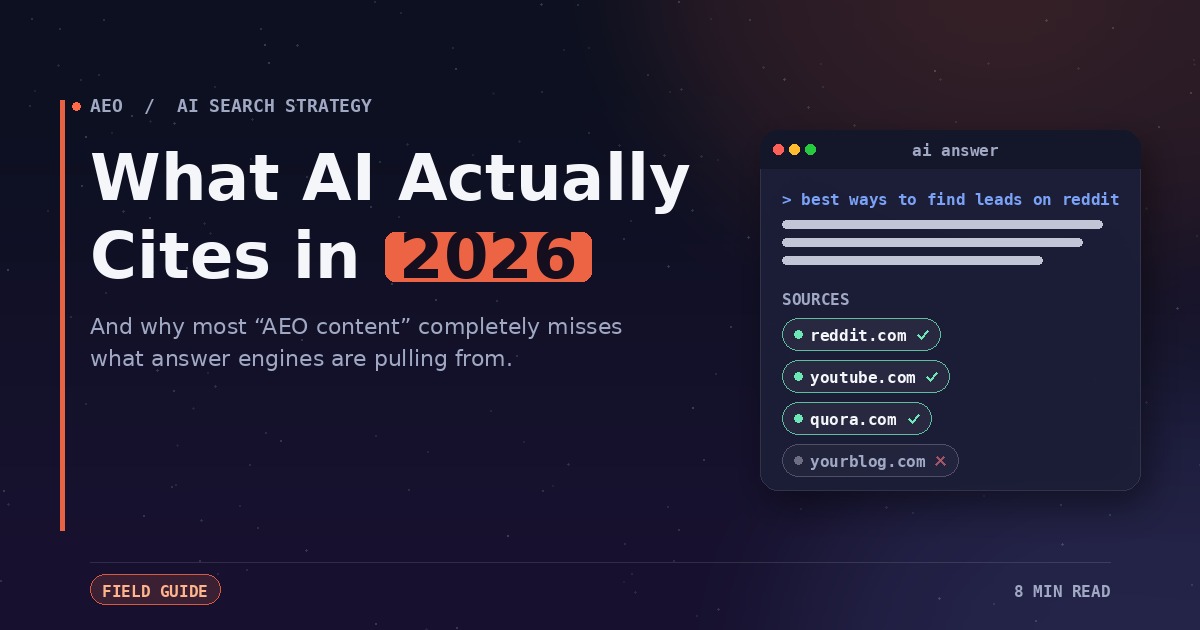

Pull up ChatGPT and ask it something commercial and messy. Best CRM for a four-person startup. Cheapest way to ship from India to Germany. Now look at what it actually cites. It is almost never the brand’s own polished marketing pages. It is almost never the glossy “pillar posts” a content team spent six weeks sanitizing. The citations skew weird: a Reddit thread from 2023, a comparison post on a site you’ve never heard of, or a Substack from one specific guy who actually used the thing.

That gap is the entire AEO (Answer Engine Optimization) conversation right now. Brands keep producing content optimized for a search engine that’s getting fewer queries every quarter, while the answer engines absorbing those queries pull from a completely different layer of the web.

A recent Search Engine Journal piece on Conductor’s upcoming AEO webinar frames this as a discipline question: AEO now sits alongside SEO, with its own formats and signals. That framing is right. Conductor’s 2026 benchmarks show AI Overviews now trigger on over 25% of all searches, nearly double the rate from 2025. But which formats actually earn citations is a more interesting question than most playbooks let on, because the honest answer cuts against current content trends. Here’s what’s actually working.

Why most SEO content doesn’t get cited

Before getting into what works, it is worth being clear about what’s failing. Most content being written for AEO right now is just legacy SEO content with FAQ schema bolted on. It doesn’t work, and the reasons are mechanical, not mystical. When an LLM pulls content into an answer, it isn’t weighing 200 ranking factors. It’s picking from a retrieval set and looking for something that is:

- A direct, complete answer to the prompt

- Structurally easy to lift (a sentence, a list item, a table row)

- Corroborated elsewhere in the index

- Not buried under 800 words of “throat-clearing”

That last one kills more “SEO content” than anything else. A typical pillar post opens with three paragraphs of context-setting. By the time it reaches its point, the model has already grabbed something from a Reddit comment that started with “honestly, just use Linear.”

The other killer is brand voice. When the question is “is Tool A better than Tool B,” and Tool A’s own content says it offers “the most comprehensive feature set,” the model treats that the way a person would: as marketing copy, not evidence. It’ll cite the comparison post that says “I switched from B to A in March, here’s what broke.” Specificity and first-hand framing read as more reliable to a model trained on the entire internet’s worth of trust signals.

The formats that actually earn citations

Across the brand mentions in AI answers that I’ve tracked over time and the ones I’ve reverse-engineered for clients, a fairly tight list of formats consistently gets pulled in.

1. Original data and small-scale research

This is high-leverage, and it is underused because it’s harder than rewriting a competitor’s post. When a model sees “73% of B2B buyers said X” alongside a study URL, it can cite that with confidence.

You don’t need a 1,000-respondent panel. A survey of 80 customers on one specific question will outperform a 4,000-word “ultimate guide.” The reason is corroboration: when something is genuinely novel and sourceable, models prefer to attribute it. If you’re a SaaS company and you don’t have at least one annual data report, that’s the highest-ROI piece of AEO work you can commission this quarter. Not because it’ll rank. Because it’ll get cited, and the citation is how the next thousand people learn your brand exists.

2. Comparison content with an actual position

Comparison posts get cited disproportionately because of their structure. A clean table (Tool A vs Tool B vs Tool C) is exactly the data shape an LLM needs when someone asks “which of these should I pick.”

But the comparisons that get cited are not the SEO-affiliate variety with 500 words of fluff and a “winner: it depends!” conclusion. The ones that perform take a position. Use A if you have under 20 people. Use B if you need the API. That decisive framing is what gets pulled into an answer. One nuance: third-party comparison content tends to outperform first-party content, but first-party still works if it’s honest. “Here’s where our tool is the wrong choice” is a sentence very few brand pages contain, and that scarcity is exactly what makes it citable.

3. Direct-answer FAQs (not the schema-stuffed kind)

There’s a difference between a useful FAQ and an FAQ schema block stuffed with keyword-targeted questions nobody asked. Real FAQs that get cited give a specific, complete answer in the first sentence without repeating the question to pad word count.

- A version that gets nothing: “What is the best email marketing tool? When it comes to choosing the best email marketing tool, there are many factors to consider…”

- A version that gets cited: “What is the best email marketing tool for small businesses? Mailchimp is the most common pick for small businesses under 2,000 contacts because its free tier covers most basic needs.”

The model can lift the second example directly into an answer. The first version sounds like a brochure.
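As a rough illustration of that difference, here is a minimal Python sketch that flags answers whose first sentence mostly restates the question. Everything in it is an assumption made for illustration: the `restates_question` helper, the tiny stopword list, and the 0.5 overlap threshold are hypothetical, and word overlap is only a crude proxy for padding, not a model of how any answer engine scores content.

```python
import re

# Hypothetical, deliberately tiny stopword list for this illustration.
STOPWORDS = {
    "what", "is", "the", "a", "an", "for", "of", "to", "it",
    "when", "in", "are", "there", "which", "how", "do", "i",
}


def content_words(text: str) -> set[str]:
    """Lowercase the text and return its non-stopword tokens."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {t for t in tokens if t not in STOPWORDS}


def restates_question(question: str, answer: str, threshold: float = 0.5) -> bool:
    """Return True if the answer's first sentence mostly repeats the question.

    The threshold is an arbitrary assumption, not a measured value.
    """
    first_sentence = re.split(r"(?<=[.!?])\s+", answer.strip(), maxsplit=1)[0]
    q_words = content_words(question)
    if not q_words:
        return False
    overlap = len(q_words & content_words(first_sentence)) / len(q_words)
    return overlap > threshold


padded = restates_question(
    "What is the best email marketing tool?",
    "When it comes to choosing the best email marketing tool, "
    "there are many factors to consider...",
)
direct = restates_question(
    "What is the best email marketing tool for small businesses?",
    "Mailchimp is the most common pick for small businesses under 2,000 "
    "contacts because its free tier covers most basic needs.",
)
print(padded, direct)
```

A check like this is best treated as a prompt for an editor to look at an answer, not as a gate; a direct answer can legitimately reuse some of the question's words.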

4. Listicles, but only the opinionated kind

Listicles still work, but the floor has risen. A list of 27 tools is background noise. A list of 5 tools that includes specific failure modes (“Asana’s timeline view gets sluggish past ~200 tasks”) and a clear opinion about who shouldn’t use each tool gets cited. Conductor notes that in 2026, self-serving listicles are “tanking” in favor of locally relevant, expert-led reviews. The differentiator is information density per item. If a competitor could swap their brand name into your post and have it still be true, your post isn’t going to get cited.

5. Expert quote roundups (when the experts are real)

When five practitioners with verifiable backgrounds give specific answers, that piece tends to get cited. Models like multi-source corroboration. The catch is that the experts have to be real. “Content marketing is all about telling stories” gets nothing. “We saw a 40% drop in organic traffic in November and traced it to our author bio pages” is the kind of quote that ends up paraphrased in an answer. If you run a small expert roundup on a question your audience is actually asking, you’ll see citation lift faster than from almost any other investment.

6. Forum and community presence

The citations don’t lie. Reddit threads and niche Discord-adjacent forums show up in AI answers constantly. If your category has an active subreddit and your brand has zero presence there, that’s a citation gap no blog will close. The fix is having actual people answer real questions in those threads, with disclosure. This is slower than blog content, but it is what gets you cited for the commercial queries traditional SEO is losing fastest.

How to produce more without producing slop

The webinar promo gestures at “agentic workflows” for scaling production, and this is where teams make expensive mistakes. Pointing an LLM at your blog and asking it to spin out 50 more pieces in your “brand voice” results in zero citations. You’ve just added to the noise floor.

What does work: using AI agents for research-heavy parts while keeping human editorial judgment for the “take.” AI is good at ingesting transcripts and generating comparison matrices. AI is bad at knowing what’s interesting or writing a first sentence that earns the second.

A production model that holds up:

- Subject matter expert interview, recorded and transcribed.

- AI summarizes the transcript and extracts specific claims.

- Editor picks the angle and outline.

- AI drafts the structural pieces (FAQ, comparison table) using the transcript as source.

- Editor writes the parts that carry the “take” and the stories.

- Fact-check pass before publish.

Roughly four human hours per piece instead of forty. Output triples without the citation rate cratering. The shortcut is in the research and structure, not in the writing of the bits that make the piece worth citing in the first place.

What to measure now that traffic is a worse signal

Classic content KPIs (organic sessions, scroll depth) are degrading. Measurement is the actual hard part of AEO. A few metrics that are starting to hold up:

- Citation rate. How often does your brand appear in AI answers for queries you care about? You should know this number for your top 50 commercial queries.

- Branded query volume. Growing branded search in Google, even as overall traffic drops, often means people are encountering your brand in AI answers and then searching for you specifically.

- Conversion rate from AI referrals. Q1 2026 data shows AI referral traffic converts at 14.2%, roughly five times the rate of traditional organic search (2.8%). If your AI-referred traffic isn’t converting better, your content is mismatching intent.

- Share of category mentions. When the answer to “best [your category] tools” is generated, are you in the list? This is the new “ranking position.”
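For teams that log AI answers by hand or through a tracking tool, the metrics above reduce to simple ratios. The sketch below is one way to compute them in Python, under stated assumptions: the `AnswerSample` record shape, the function names, and the sample domains and brands are all hypothetical placeholders, not the output format of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerSample:
    """One AI answer captured for a tracked query (hypothetical record shape)."""
    query: str
    cited_domains: set[str] = field(default_factory=set)
    mentioned_brands: set[str] = field(default_factory=set)


def citation_rate(samples: list[AnswerSample], domain: str) -> float:
    """Fraction of sampled answers that cite the given domain."""
    if not samples:
        return 0.0
    return sum(domain in s.cited_domains for s in samples) / len(samples)


def mention_share(samples: list[AnswerSample], brand: str) -> float:
    """Fraction of sampled answers that mention the brand at all."""
    if not samples:
        return 0.0
    return sum(brand in s.mentioned_brands for s in samples) / len(samples)


def conversion_lift(ai_rate: float, organic_rate: float) -> float:
    """How many times the organic conversion rate the AI-referral rate is."""
    return ai_rate / organic_rate


# Made-up sample data purely for illustration.
samples = [
    AnswerSample("best crm for startups", {"example.com"}, {"ExampleCRM"}),
    AnswerSample("crm for four-person team", {"reddit.com"}, {"ExampleCRM"}),
    AnswerSample("cheapest crm", {"reddit.com"}, {"OtherCRM"}),
    AnswerSample("crm with good api", set(), {"OtherCRM"}),
]

print(citation_rate(samples, "example.com"))   # 0.25
print(mention_share(samples, "ExampleCRM"))    # 0.5
print(round(conversion_lift(14.2, 2.8), 2))    # 5.07, using the figures quoted above
```

Because AI answers vary from run to run, a single capture per query is a weak sample; repeating the capture over time and averaging is a reasonable design choice if the tracking setup allows it.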

Stop tracking volume of organic clicks for queries that now resolve in an AI answer. Those clicks are not coming back. The question is whether you’re in the answer at all.

The unglamorous part

There’s no hack here. The content that earns AI citations is, almost without exception, the same content that would have been good in 2018: specific, well-sourced, and opinionated. What’s changed is that the floor for “good enough” has risen, because mediocre content no longer ranks its way into a click. It just sits in an index getting averaged into the background noise.

The brands that will own AEO over the next two years are writing fewer, denser pieces and showing up consistently in the communities where their category actually gets discussed. Audit your last quarter of output. If a model has no reason to prefer your version over the next ten versions of the same idea, you have a content strategy problem, not an AEO problem. Fix the content strategy and the AEO mostly takes care of itself.